How Claude Code Helped Us Get 1,000+ Waitlist Signups in 2 Months

A new domain, zero backlinks, and 1,000+ waitlist signups for CreatorFlow, mostly from AI search engines. Here's the Claude Code content workflow behind it, with real data.

Key Takeaways

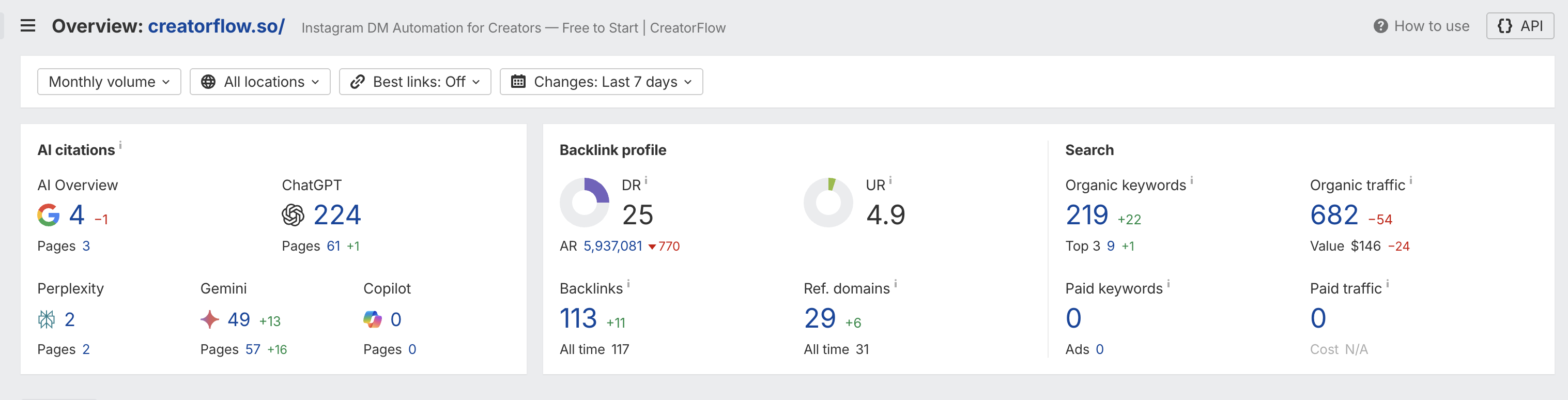

- 1,000+ waitlist signups in 2 months on a brand-new domain (creatorflow.so), with the majority of referral traffic coming from AI search engines like ChatGPT, Gemini, and Perplexity

- 224 ChatGPT citations and 49 Gemini citations within 90 days, tracked via Ahrefs AI citation monitoring

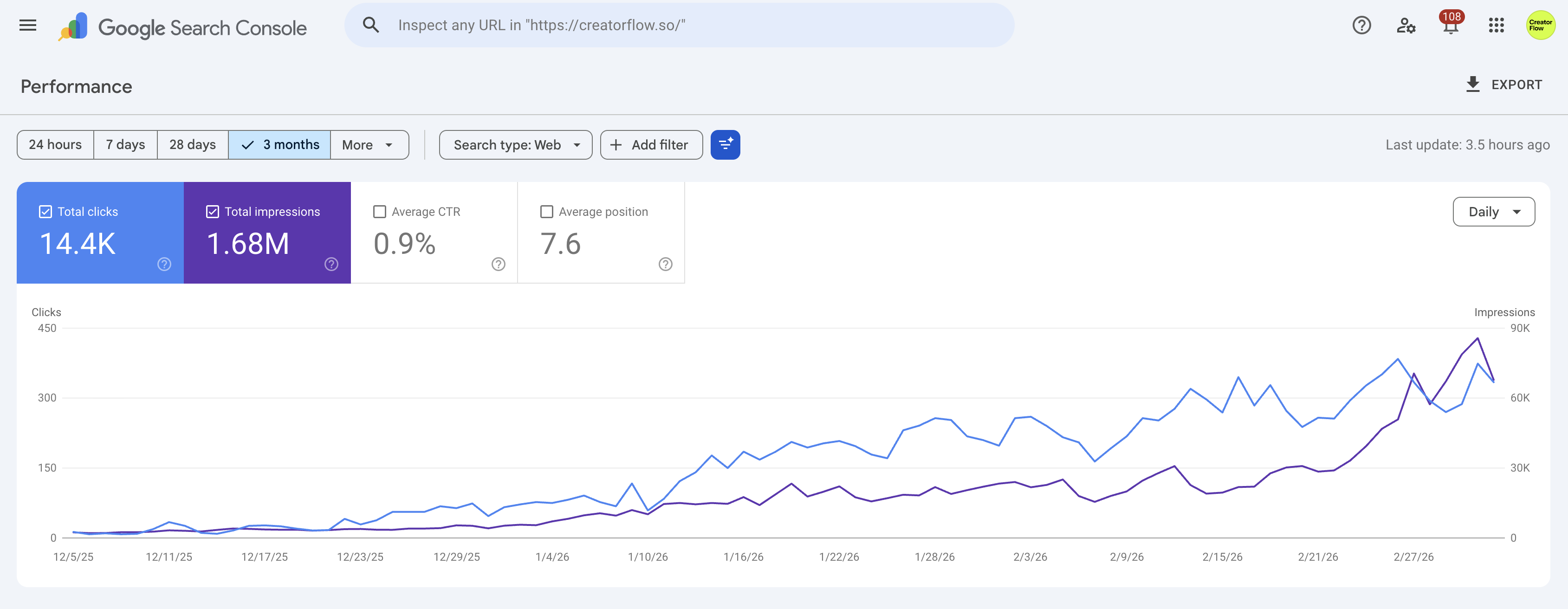

- 14.4K organic clicks and 1.68M impressions in Google Search Console over the same period, despite a DR of only 25

- Claude Code was not a magic bullet. It was a content accelerator that let a solo founder produce citation-ready content at a pace that would otherwise require a team

- AI slop prevention was built into the workflow through strict CLAUDE.md rules: banned phrases, mandatory source verification, and structured formatting requirements

- Results are solid for a new domain with no paid ads, but context matters: this is early traction, not a hockey stick

The Setup: New Domain, Solo Founder, No Ad Budget

CreatorFlow is an Instagram DM automation tool that launched on a fresh domain in December 2025. No existing audience. No domain authority. No paid acquisition budget.

The hypothesis was straightforward: if AI search engines (ChatGPT, Perplexity, Gemini, Google AI Overviews) are becoming a primary discovery channel, then content optimized for AI citation could drive early traction faster than traditional SEO alone on a new domain.

Claude Code was the production engine. Not because it writes content for you, but because it lets a single person execute a content strategy that hits both traditional SEO and AEO (Answer Engine Optimization) requirements simultaneously.

Two months later, the waitlist crossed 1,000 signups. Here's what the data looks like and what principles drove it.

The Numbers: Honest Assessment

Let's look at the actual data before drawing conclusions.

AI Citation Performance (Ahrefs, March 2026)

| Metric | Value | Context |
|---|---|---|
| ChatGPT citations | 224 | Across 61 pages |
| Gemini citations | 49 | Across 57 pages |
| Google AI Overviews | 4 | Across 3 pages |
| Perplexity citations | 2 | Across 2 pages |
| Domain Rating | 25 | New domain, 2 months old |
| Referring domains | 29 | All time: 31 |
| Organic keywords | 219 | Top 3 positions: 9 |
| Organic traffic | 682 | Monthly estimated visits |

Google Search Console (2-Month View)

| Metric | Value |
|---|---|
| Total clicks | 14.4K |
| Total impressions | 1.68M |
| Average CTR | 0.9% |
| Average position | 7.6 |

Are These Results Good?

Context is everything. For a 3-month-old domain with DR 25, no paid traffic, and no pre-existing audience, these numbers are strong but not extraordinary.

What's genuinely impressive: 224 ChatGPT citations on a brand-new domain. Many established sites with DR 50+ struggle to get cited by AI search engines. Getting cited early means AI search engines are already retrieving CreatorFlow content as a reference, an advantage that can compound as more pages get indexed.

What's modest: 682 monthly organic traffic is low in absolute terms. A 0.9% CTR suggests rankings are still mostly in positions 5-10 (the data confirms an average position of 7.6). The domain needs more time to build authority.
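The reported CTR can be reproduced from the two Search Console totals. This is a minimal sketch using the rounded figures from the table above; the function name `click_through_rate` is ours, not part of any GSC tooling.

```python
# Figures from the Google Search Console table above (rounded as reported).
clicks = 14_400
impressions = 1_680_000


def click_through_rate(clicks: int, impressions: int) -> float:
    """Return click-through rate as a percentage."""
    if impressions == 0:
        raise ValueError("impressions must be greater than zero")
    return clicks / impressions * 100


ctr = click_through_rate(clicks, impressions)
print(f"CTR: {ctr:.2f}%")  # CTR: 0.86%
```

The unrounded value is about 0.86%, which GSC displays as 0.9%.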

What's promising: The growth curve in GSC is consistently upward. Impressions hit 1.68M in 2 months, meaning the content is indexing and appearing in results, even if clicks haven't fully caught up yet. That gap typically closes as the domain matures.

The 1,000+ waitlist signups came primarily from AI search referrals, not organic search. This is the key insight: AI engines cited CreatorFlow content, users asked follow-up questions about the tool, and those conversations converted to waitlist visits.

The Claude Code Content Workflow

Claude Code didn't write articles and publish them. It operated within a structured system that enforced quality at every stage. Here's how the workflow functioned.

CLAUDE.md: The Editorial Rulebook

A Claude Code project can include a CLAUDE.md file that acts as persistent instructions. For content production, this file contained rules that shaped every piece of output. A few principles worth sharing:

Banned language list. A specific list of phrases Claude Code was not allowed to use in any copy. This is the single most effective anti-slop measure. Examples from the rulebook:

Banned phrases (partial list):

- Em-dashes

- Filler words: just, very, actually, basically

- Corporate speak: leverage, robust, scalable, streamline

- AI clichés: "No X. No Y. Just Z." / "game-changer" / "supercharge"

- Hedging: "It's worth noting" / "You may want to consider"

- Pattern: "It's not just about X. It's about Y."

Without this list, AI-generated content defaults to the same cadence and vocabulary that readers (and AI engines evaluating source quality) have learned to recognize as machine output.
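A banned list like this can also be checked mechanically after a draft is generated. The sketch below is an assumption about how such a check might look, not the rulebook's actual implementation: the word lists are a partial copy of the examples above, and `find_banned` is a hypothetical helper name.

```python
import re

# Hypothetical subset of the banned list; the full rulebook is not shown here.
BANNED_WORDS = ["just", "very", "actually", "basically",
                "leverage", "robust", "scalable", "streamline"]
BANNED_PHRASES = ["game-changer", "supercharge", "it's worth noting",
                  "you may want to consider"]
EM_DASH = "\u2014"


def find_banned(text: str) -> list[str]:
    """Return every banned word, phrase, or em-dash found in text."""
    hits = []
    lowered = text.lower()
    for word in BANNED_WORDS:
        # Word boundaries keep "just" from matching inside "adjust".
        if re.search(rf"\b{re.escape(word)}\b", lowered):
            hits.append(word)
    for phrase in BANNED_PHRASES:
        if phrase in lowered:
            hits.append(phrase)
    if EM_DASH in text:
        hits.append("em-dash")
    return hits
```

Running `find_banned("We just leverage a robust game-changer.")` returns `["just", "leverage", "robust", "game-changer"]`. Structural patterns such as "No X. No Y. Just Z." need a human read or a more careful matcher than this one.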

Mandatory citation protocol. Every external claim required a source with a URL and date. The rule was explicit: if a claim cannot be verified via web search, remove it, reframe it, or mark it for manual research. No fabricated statistics. No "studies show" without the study.

Citation format enforced:

"81% of SEOs prioritize AI/GEO ([Lumar Survey](URL), March 2026)"

If a claim cannot be verified:

1. Remove the claim, OR

2. Reframe: "Many SEOs report...", OR

3. Mark [NEEDS VERIFICATION] for manual research
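The citation rule above lends itself to a simple automated pass. The following is a rough sketch, assuming citations follow the `([Source](URL), Month YYYY)` shape shown in the example and that a percentage is the signal for "this sentence makes a statistical claim". Both regexes and the `review_claim` name are illustrative assumptions.

```python
import re

# Matches "([Label](url), Month YYYY)" as in the enforced citation format.
CITATION = re.compile(
    r"\(\[[^\]]+\]\([^)\s]+\),\s+"
    r"(January|February|March|April|May|June|July|August|"
    r"September|October|November|December)\s+\d{4}\)"
)
# Treat any percentage as a statistical claim that needs a source.
STATISTIC = re.compile(r"\d+(\.\d+)?%")


def review_claim(sentence: str) -> str:
    """Return 'ok' or 'NEEDS VERIFICATION' for a single sentence."""
    has_stat = STATISTIC.search(sentence) is not None
    has_citation = CITATION.search(sentence) is not None
    if has_stat and not has_citation:
        return "NEEDS VERIFICATION"
    return "ok"
```

A sentence with a percentage and no matching citation comes back as `NEEDS VERIFICATION`, mirroring option 3 in the protocol. The check only confirms a citation is present and well-formed; whether the source supports the claim still needs a human or a web search.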

Structured answer formatting. Every H2 section started with a 50-70 word "citation block" written in third-person factual tone. This pattern exists specifically for AI extraction. AI search engines pull short, definitive paragraphs as source material. If your content opens every section with a direct answer, you're giving AI engines what they need to cite you.
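The 50-70 word target is easy to verify per section. A minimal sketch, assuming each section body is available as plain text with paragraphs separated by blank lines; `opening_paragraph` and `has_citation_block` are hypothetical helper names.

```python
def opening_paragraph(section_body: str) -> str:
    """Return the first non-empty paragraph of a section body."""
    for block in section_body.split("\n\n"):
        if block.strip():
            return block.strip()
    return ""


def has_citation_block(section_body: str, low: int = 50, high: int = 70) -> bool:
    """True when the opening paragraph has between low and high words."""
    word_count = len(opening_paragraph(section_body).split())
    return low <= word_count <= high
```

This only counts words. Tone (third-person, factual) is not something a word count can confirm.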

Paragraph and sentence constraints. Maximum 2-3 sentences per paragraph. No sentences over 30 words. Active voice default. One idea per paragraph. These aren't style preferences; they're readability constraints that keep Flesch-Kincaid grade levels in the 8-10 range where content performs best for both human readers and AI extraction.
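The two length limits can be checked with a few lines of code. This sketch assumes a naive sentence splitter (it breaks after `.`, `!`, or `?` followed by whitespace, so abbreviations will confuse it) and does not compute a Flesch-Kincaid score; the names are illustrative.

```python
import re

MAX_SENTENCES = 3   # upper bound of the "2-3 sentences" rule
MAX_WORDS = 30      # no sentence over 30 words


def split_sentences(paragraph: str) -> list[str]:
    """Naive splitter: breaks after ., !, or ? followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", paragraph.strip())
    return [part for part in parts if part]


def check_paragraph(paragraph: str) -> list[str]:
    """Return a list of rule violations; an empty list means the paragraph passes."""
    problems = []
    sentences = split_sentences(paragraph)
    if len(sentences) > MAX_SENTENCES:
        problems.append(f"{len(sentences)} sentences (max {MAX_SENTENCES})")
    for sentence in sentences:
        word_count = len(sentence.split())
        if word_count > MAX_WORDS:
            problems.append(f"sentence has {word_count} words (max {MAX_WORDS})")
    return problems
```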

The Article Production Process

A typical article went through this sequence:

- Keyword and intent research. Identify what people search for and what AI engines currently answer poorly on the topic

- Claude Code drafts the structure. H1, H2s, H3s, with citation blocks under each heading. The CLAUDE.md rules constrain output format automatically

- Source verification pass. Claude Code searches the web for every claim and attaches citations. Anything unverifiable gets flagged or removed

- Human editorial review. Read every paragraph. Cut what sounds like AI filler. Add personal experience and proprietary data that only a human would have

- AEO optimization. Add FAQ sections targeting People Also Ask queries. Include "Summarize with AI" buttons that pre-fill prompts mentioning the brand

- Schema markup. Article + FAQ + Breadcrumb JSON-LD on every post

The human step is non-negotiable. Claude Code handles structure, research, and formatting at speed. But the editorial voice, the proprietary insights, and the "does this sound like a person wrote it" filter require a human in the loop.
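For the schema markup step, the FAQ portion can be generated from the same question and answer pairs that appear on the page. The sketch below builds a schema.org `FAQPage` object; the helper name `faq_jsonld` is an assumption, and the Article and Breadcrumb markup mentioned above would need their own builders.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


print(faq_jsonld([
    ("Does Claude Code write the entire article?",
     "No. It produces a structured draft that a human then edits."),
]))
```

The output goes inside a `<script type="application/ld+json">` tag in the page head or body.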

Speed Advantage: What Claude Code Changes

Without Claude Code, producing one article that meets both SEO and AEO standards takes a solo creator 6-8 hours: research, outlining, writing, source verification, formatting, schema markup, internal linking.

With Claude Code and a well-configured CLAUDE.md, the same article takes 2-3 hours. The tool handles the scaffolding. You handle the thinking.

That 3-4 hour savings per article means a solo founder can publish 3-4 optimized articles per week instead of 1-2. Over 12 weeks, that's the difference between 15 articles and 45. Volume matters for AI citation because more indexed pages means more surface area for AI engines to discover and reference.
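The cadence math is worth spelling out, since the figures above are rounded. A quick sketch using only the ranges quoted in this section (the function names are ours):

```python
WEEKS = 12


def articles_published(per_week_low: int, per_week_high: int, weeks: int = WEEKS):
    """Return the (low, high) article count over the given number of weeks."""
    return per_week_low * weeks, per_week_high * weeks


def hours_saved(manual: tuple[int, int], assisted: tuple[int, int]):
    """Return the (smallest, largest) possible saving per article in hours."""
    return manual[0] - assisted[1], manual[1] - assisted[0]


without_tool = articles_published(1, 2)   # (12, 24)
with_tool = articles_published(3, 4)      # (36, 48)
saving = hours_saved((6, 8), (2, 3))      # (3, 6)

print(without_tool, with_tool, saving)
```

The "15 versus 45" comparison sits inside these ranges, as do the roughly 40 articles reported in the FAQ. The quoted 3-4 hour saving is at the conservative end of what the time estimates allow.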

Why AI Search Engines Cited a New Domain

A DR 25 domain with 29 referring domains shouldn't get 224 ChatGPT citations. Traditional SEO logic says authority takes years to build. But AI search engines don't rank the same way Google's organic algorithm does.

AI engines evaluate content based on:

- Direct answer quality. Does the content answer the question in a self-contained paragraph? Citation blocks are built for this

- Entity clarity. Is the product, company, or concept defined clearly and consistently? CreatorFlow was always described the same way across every page: "CreatorFlow is an Instagram DM automation tool"

- Recency and freshness. AI engines favor current content. Every article carried an `updatedDate` in frontmatter and cited 2025-2026 sources

- Structured data. FAQ schema, Article schema, and Organization schema gave AI crawlers machine-readable context about what each page covers

The content wasn't competing for Google's top 3 blue links (only 9 keywords reached top 3). It was competing for AI citation slots, where the evaluation criteria are different and a new domain with structured, well-cited content can win.

Anti-Slop Measures That Made the Difference

AI slop is the biggest risk of using AI tools for content. If every article reads like ChatGPT wrote it, you've created volume without value. Here's what prevented that.

The Banned Language Filter

The CLAUDE.md banned language list catches the most obvious AI patterns. But it goes deeper than avoiding "leverage" and "streamline." The list targets structural patterns that AI defaults to:

- "No fluff. No theory. Just results." (the false tricolon)

- "Enter: [thing]" (the dramatic introduction)

- "And here's the kicker" (the fake spontaneity)

- Arrow formatting for lists

- Short question hooks: "The best part?" / "Want access?"

When you ban these patterns, Claude Code has to find more natural ways to transition between ideas. The output reads differently from the thousands of AI-generated articles that all share the same rhetorical DNA.

Human-Only Content Layers

Certain types of content were never delegated to Claude Code:

- Proprietary data. The screenshots in this article, the waitlist numbers, the specific conversion observations. Only a human has access to this

- Contrarian opinions. "Are these results good?" requires judgment, not information retrieval

- Product experience. How the tool feels to use, what surprised us, what didn't work as expected

These layers are what make content defensible. Any competitor can use Claude Code to generate an article about Instagram DM automation. Nobody else has CreatorFlow's specific GSC data or Ahrefs citations to reference.

Source-First Writing

The CLAUDE.md enforced a "lead with data, not opinions" rule. Every section had to open with a verifiable claim or a specific number. This discipline prevented the vague, opinion-heavy content that AI tools produce when given open-ended prompts.

Compare:

- Weak: "AI search is becoming more important for businesses."

- Strong: "ChatGPT cited CreatorFlow 224 times across 61 pages within 90 days of the domain launching, despite a DR of only 25."

The second version is citation-ready. An AI engine can extract it, attribute it, and present it as evidence. The first version is filler that no engine would bother citing.
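A crude proxy for the "lead with data" rule is to check whether a section's first sentence contains a number. This is a heuristic sketch under that assumption, not the actual CLAUDE.md rule; `opens_with_data` is a hypothetical name, and a digit does not prove the claim is verifiable.

```python
import re


def opens_with_data(section_text: str) -> bool:
    """True when the first sentence of the section contains at least one digit."""
    first_sentence = re.split(
        r"(?<=[.!?])\s+", section_text.strip(), maxsplit=1
    )[0]
    return bool(re.search(r"\d", first_sentence))


weak = "AI search is becoming more important for businesses."
strong = ("ChatGPT cited CreatorFlow 224 times across 61 pages within 90 days "
          "of the domain launching, despite a DR of only 25.")

print(opens_with_data(weak), opens_with_data(strong))  # False True
```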

What You Can Take From This

This isn't a playbook that guarantees 1,000 signups. The results depended on timing (AI search is still early, less competition for citations), niche (Instagram DM automation has clear search intent), and consistency (publishing multiple times per week for 12 straight weeks).

What's transferable is the method:

1. Build Your CLAUDE.md as an Editorial System

Don't use Claude Code with default settings. Write explicit rules for:

- Banned phrases and patterns (prevents AI voice)

- Citation requirements (prevents fabrication)

- Structural formatting (enables AI extraction)

- Paragraph and sentence length limits (ensures readability)

The CLAUDE.md file is the difference between "AI-assisted content" and "AI slop with a human name on it."

2. Optimize for Citation, Not Position

On a new domain, you won't outrank established sites for head terms. But you can get cited by AI engines if your content:

- Opens every section with a direct, extractable answer

- Defines entities clearly and consistently

- Uses structured data (FAQ, Article, Organization schema)

- Cites current sources with dates

3. Keep Humans in the Loop

Claude Code is a production accelerator. It handles structure, research scaffolding, source verification, formatting, and schema markup. The human handles editorial judgment, proprietary data, voice, and the "does this pass the smell test" filter.

The moment you remove the human from the loop, you lose the defensibility that makes content worth citing.

4. Measure AI Citations, Not Organic Traffic

For a new domain, organic traffic takes 6-12 months to compound. AI citations can happen within weeks if the content quality is high. Track both, but understand that the waitlist growth came from AI referrals first, organic search second.

Tools like Ahrefs now track AI citations from ChatGPT, Gemini, Perplexity, and Google AI Overviews. Add this to your reporting alongside traditional rank tracking.


Disclosure: What Claude Code Didn't Do

Transparency matters. Here's what Claude Code was not responsible for:

- Product-market fit. CreatorFlow solves a real problem (Instagram creators spending hours on manual DM replies). No amount of content fixes a product nobody wants

- Landing page conversion. The waitlist conversion rate depended on copy, design, and offer clarity, all human decisions

- Distribution beyond search. Some signups came from Reddit, direct outreach, and word-of-mouth that had nothing to do with AI-generated content

- Backlink acquisition. The 29 referring domains came from genuine relationships and content worth linking to, not automated outreach

Claude Code made content production 2-3x faster with higher structural quality. It didn't replace strategy, product thinking, or distribution work.

FAQ

How many articles did you publish in 2 months?

CreatorFlow published approximately 40 articles over 12 weeks, averaging 3-4 per week. Each article targeted a specific keyword cluster and followed the AEO-optimized structure described in this case study.

Does Claude Code write the entire article?

No. Claude Code produces a structured draft with headings, citation blocks, sourced claims, and proper formatting. A human then edits for voice, adds proprietary data and opinions, cuts anything that reads like AI filler, and makes final publishing decisions. The tool handles roughly 60% of the production work; the remaining 40% is editorial and strategic.

Can AI search citations drive real signups?

Yes. When ChatGPT or Perplexity cites your content in response to a user question, that user is already in a research or buying mindset. CreatorFlow's waitlist signups from AI search had higher intent than typical organic traffic because the user was actively asking about Instagram DM automation solutions.

What tools track AI search citations?

Ahrefs added AI citation tracking in late 2025, covering ChatGPT, Gemini, Google AI Overviews, Perplexity, and Copilot. Other tools like Otterly.ai and Peec AI offer similar monitoring. No tool captures every AI mention, so cross-referencing multiple sources gives the most complete picture.

Is a DR 25 domain too weak to compete?

For traditional organic search, DR 25 limits your competitiveness on high-volume head terms. For AI citations, domain authority matters less than content structure, answer quality, and entity clarity. A new domain with well-structured, well-cited content can earn AI citations that DR 60+ sites miss because their content isn't formatted for extraction.

How much does this approach cost?

Claude Code pricing is usage-based through Anthropic (approximately $100-200/month for heavy content production). Ahrefs for citation tracking starts at $129/month. The total tooling cost is under $350/month, which is significantly less than hiring a content writer or SEO specialist. The time investment is 2-3 hours per article for the human editorial layer.
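The monthly total follows directly from the two prices quoted above. A small sketch, treating both figures as the approximations they are:

```python
# Approximate monthly prices quoted in the answer above, in USD.
claude_code_monthly = (100, 200)   # low and high end for heavy content production
ahrefs_monthly = 129               # starting price for citation tracking

low = claude_code_monthly[0] + ahrefs_monthly
high = claude_code_monthly[1] + ahrefs_monthly

print(f"Tooling: ${low}-${high}/month")  # Tooling: $229-$329/month
```

Even at the high end, the total stays under the $350/month ceiling.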

Founder, CC for SEO

Martech PM & SEO automation builder. Bridges marketing, product, and engineering teams. Builds CC for SEO to help SEO professionals automate workflows with Claude Code.
